You will work in the Interactive Systems group within SCC, Lancaster University. You will have the opportunity to contribute to cutting-edge research in Human-Computer Interaction and to interact with experts in the field from all over the world as you disseminate your research.

For EU/UK Students: Various funding schemes are available for eligible candidates which cover fees, maintenance (stipend) and training/travel costs.

For Overseas Students: Alternative means of funding (such as government scholarships) are encouraged.

Applications are accepted year-round. However, a Michaelmas term start (October) is expected. For a start in October, applications should be completed before May. Guidance on the application procedure is available to prospective candidates.

A list of sample topics is provided below. Read through it and see if something interests you, then email me to discuss your options further. Alternative topics can be agreed upon through discussion.

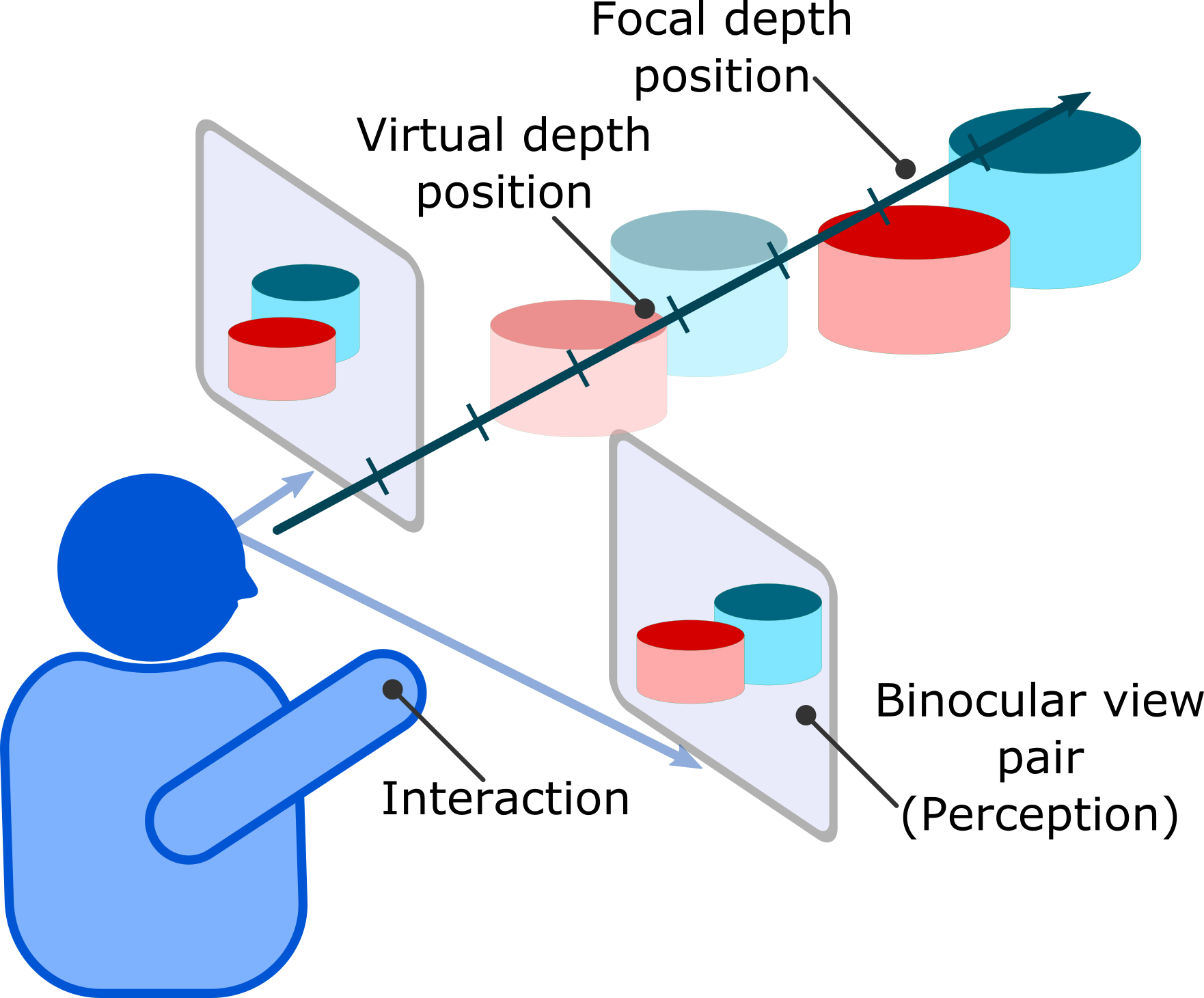

The candidate will investigate perception- and interaction-related effects during the use of 3D-capable displays. This includes new-generation HMDs like the Rift, HoloLens and Vive, as well as other large form-factor 3D displays (flat-panel as well as volumetric). The investigation will require the candidate to create an experimental rig that is compatible with different types of displays and tracking systems, and to use it in user experiments to identify perception- and interaction-related effects. There is an additional opportunity to look into novel display fabrication if the candidate wishes.

The ideal candidate will have experience with one or more of the following: hardware prototyping, graphics and game-engine-based applications, and eye-tracking-based research.

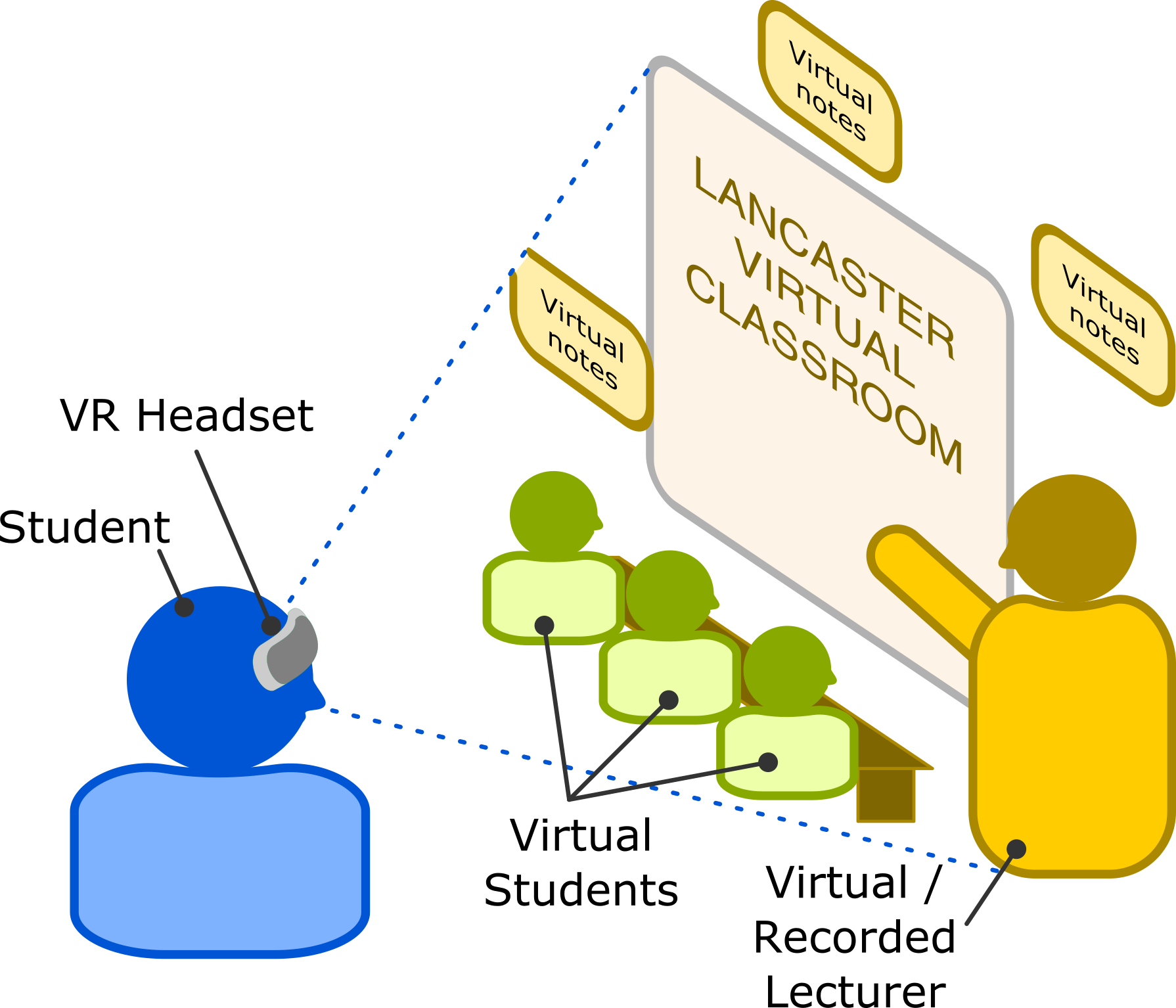

The candidate will investigate the use of commercial state-of-the-art Virtual Reality (VR) and Augmented Reality (AR) technologies in the educational context of Massive Open Online Courses (MOOCs) and Computing. The exploration is structured around leveraging VR/AR environments to rethink the delivery of computing concepts and to support programming and software development. This can extend into multi-user peer programming/learning scenarios, virtual learning environments (VLEs) for large classrooms, or new VR paradigms for VLEs. The main focus is the design of interactions, metaphors and interfaces to support such activities.

The ideal candidate will have programming experience and an understanding of graphics and game-engine-based applications in VR/AR. Prior teaching or pedagogical delivery experience is a plus.

The candidate will investigate the use of a two-sided transparent display for collaborative work between two or more users. Normal implementations of such a display lead to lateral inversion of the content so that it is accessible to each user. This leads to a contextual mismatch during collaborative tasks such as pointing. The candidate will explore display designs that overcome lateral inversion or mitigate it through alternative means. Further exploration will involve interactions in situated augmented reality, wherein one or both users will see their collaborator immersed (collocated) in an augmented environment.

The ideal candidate will have experience with hardware prototyping and interaction design, and an understanding of graphics and game-engine-based applications.

The candidate will investigate interaction with data that takes a physical form (data physicalization) and identify a core set of guidelines that can be used to physicalize data in common contexts, based on actuated modules. This will involve exploration of modularized physicalizations capable of self-assembly. Such modular physicalizations will be able to self-assemble into a static or dynamic structure based on user-specified constraining parameters. This work will also identify different forms of sensing to support interaction with the modular elements.

The ideal candidate will have experience with programming, hardware prototyping and user studies. Prior experience with robotics is a definite plus.