A key element of our research on the Relaciones Geográficas is the analysis of the textual information contained within these sixteenth-century reports. To do this, we will be drawing on techniques from Computer Science, namely Natural Language Processing (NLP) and Machine Learning. Whilst these areas have been studied a great deal, the vast majority of this research has been conducted on modern languages, and largely on English.

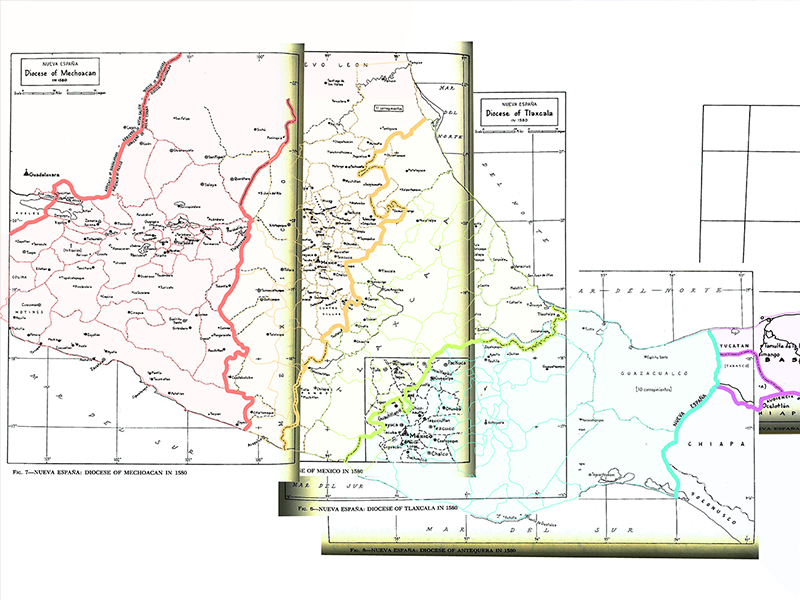

Our corpus is, of course, neither modern nor English. The Relaciones Geográficas that we are currently studying were written in the sixteenth century by Spanish officials and contributed to by indigenous people across Mexico. The mix of Spanish and indigenous languages throughout the Relaciones poses a challenge to these computational methods which have, for the most part, been trained on modern text. We are therefore faced with the task of training our own NLP system which takes into account the unique challenges presented by the Relaciones Geográficas.

Corpus Annotation

We have recently established a partnership with tagtog, an NLP-tech company that has developed an online text annotation tool with the capacity to train models to annotate large quantities of textual information. Tagtog offers a free version which allows a single user to work with up to 100 documents and to make use of its Machine Learning automatic annotation capabilities. For more information on their free and paid plans, head on over to their website.

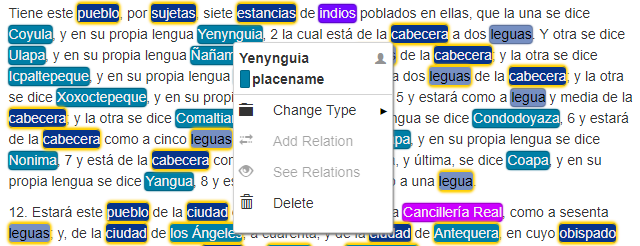

So far, we have been using tagtog to annotate a few excerpts from our corpus. Annotation essentially means assigning metadata about specific terms or phrases in order to train the machine to recognise key words. For example, in the excerpt below, we have tagged “Yenynguia” as a place-name (you may note that this place is also known as Coyula, which can be recorded through the use of dictionaries – as explained later in this post).

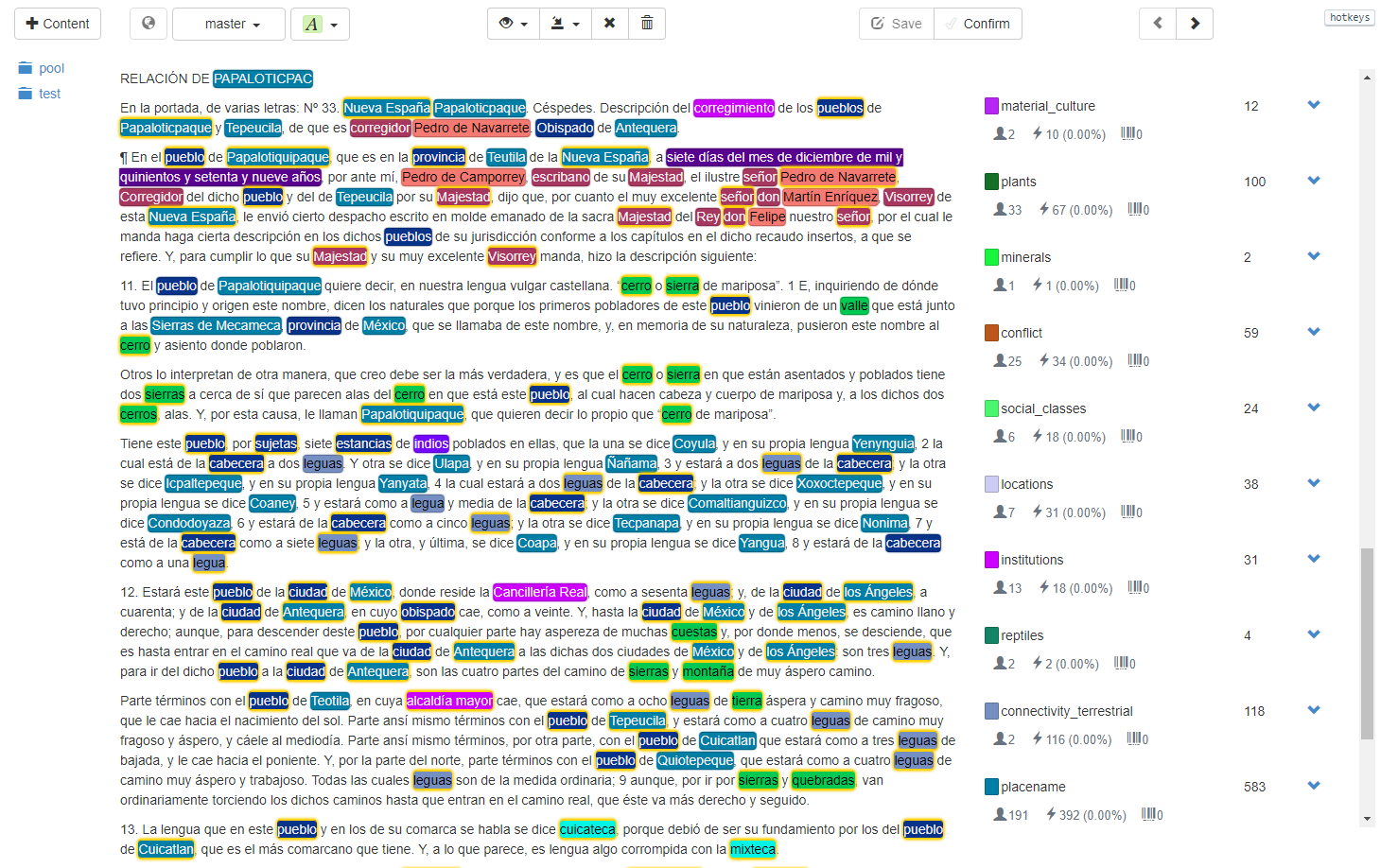
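To make the idea of an annotation a little more concrete, here is a minimal Python sketch of one way it could be represented: a span of characters in the text plus a label. This is not tagtog's internal format; the `Annotation` class, the `annotate` helper and the English sample sentence are all hypothetical and only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Annotation:
    """A labelled span of characters in a source text (hypothetical structure)."""
    start: int   # index of the first character of the span
    end: int     # index one past the last character of the span
    label: str   # entity type, e.g. "place-name"

    def text(self, source: str) -> str:
        """Return the piece of the source text that this annotation covers."""
        return source[self.start:self.end]


def annotate(source: str, term: str, label: str) -> list:
    """Create one Annotation for every exact occurrence of `term` in `source`."""
    found = []
    position = source.find(term)
    while position != -1:
        found.append(Annotation(position, position + len(term), label))
        position = source.find(term, position + len(term))
    return found


# Invented sample sentence, not a quotation from the corpus.
sample = "Yenynguia is also known as Coyula."
annotations = annotate(sample, "Yenynguia", "place-name")
for annotation in annotations:
    print(annotation.label, "->", annotation.text(sample))
```

Storing character offsets rather than the word itself means the same term can be tagged separately each time it appears, which matters when one name is used for different things in different passages.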

Before annotating anything, it is important to define the types of entity that need identifying within the text. We started with a few key categories (such as place-names, institutions and geographic features), and have since expanded to include around forty categories which reflect the diverse nature of information contained within the Relaciones. Whilst this is a considerable number of categories to be using, tagtog is coping wonderfully so far!

Below is an excerpt from the Relación de Papaloticpac (Antequera) which gives an indication of the sort of information we have been annotating with tagtog.

Within the first 800 words or so of this Relación, we already have useful information highlighting numerous pueblos in the area and their location in relation to one another, the relevant “ilustre” and “muy excelente” señores involved in the production of this report, as well as some indication of geographic features in the area: cerros, sierras and quebradas. This is all valuable information which we want to be identifiable for textual analysis.

Dictionaries

As mentioned earlier, in cases where we have alternative names for one place (Yenynguia = Coyula), it is possible to use dictionaries to tell the machine that these entities are one and the same. Given the inconsistent spelling throughout the Relaciones Geográficas, normalization of entities is essential. You may be able to see that, within the first few lines of the excerpt above, we are given three different spellings of the named pueblo. After ‘Papaloticpac’, we have ‘Papaloticpaque’ as well as ‘Papalotiquipaque’. These all refer to the same place, of course, but the machine needs to be told this explicitly. In tagtog, this is made possible through the use of dictionaries, which enable the normalization of entities. So, in the case of ‘Papaloticpac’, we would include each spelling in the ‘dictionary’ as follows:

(note that the all-caps instance of the word also has to be included for the machine to recognise this as a match)

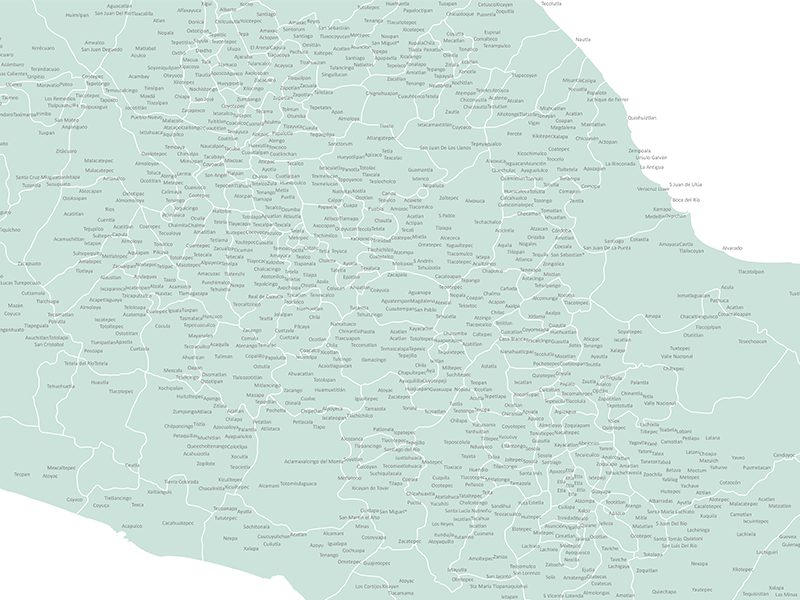
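The principle behind this kind of normalization can be sketched in a few lines of Python. This is only an illustration of the idea, not tagtog's dictionary format or matching logic: the `VARIANTS` table, the function names, the choice of ‘Papaloticpac’ as the canonical form and the all-caps entry are assumptions made for the example. Matching here is exact and case-sensitive, which is why the all-caps spelling needs its own entry.

```python
# Hypothetical variant table: canonical name -> every spelling that should map to it.
# The all-caps entry is an assumed example of a spelling that must be listed explicitly.
VARIANTS = {
    "Papaloticpac": [
        "Papaloticpac",
        "PAPALOTICPAC",
        "Papaloticpaque",
        "Papalotiquipaque",
    ],
}


def build_lookup(variants: dict) -> dict:
    """Invert the variant table so that each spelling points to its canonical name."""
    lookup = {}
    for canonical, spellings in variants.items():
        for spelling in spellings:
            lookup[spelling] = canonical
    return lookup


def normalize(name: str, lookup: dict):
    """Return the canonical name for an exact spelling, or None if it is not listed."""
    return lookup.get(name)


LOOKUP = build_lookup(VARIANTS)

for spelling in ["Papaloticpaque", "Papalotiquipaque", "PAPALOTIQUIPAQUE"]:
    print(spelling, "->", normalize(spelling, LOOKUP))
```

In this sketch the last spelling comes back as `None`, because the all-caps form of that particular variant was never added to the table. Any spelling that is not listed simply goes unmatched, so the dictionary needs to grow as new variants turn up in the corpus.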

Our next steps, once we have annotated some more of the corpus, will be to train a model using the annotations we have created. To do this, we will import some ‘raw’, un-annotated text, which the machine will annotate automatically given what it has learned from our manual annotations and dictionaries. Of course, this will not produce 100% accuracy. We will then manually correct any errors and retrain, repeating this process until the model reaches a high level of accuracy. Training a model which can accurately produce automatic annotations will enable far more intuitive interaction with our multilingual corpus of over 3 million words.
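One common way to put a number on "accuracy" in this kind of correct-and-retrain loop is to compare the machine's annotations with the manually corrected ones and compute precision, recall and F1. The Python sketch below assumes each annotation is a `(start, end, label)` tuple and counts only exact matches. The `score` function and the example spans are hypothetical, and this is not necessarily the measure tagtog itself reports.

```python
def score(predicted, corrected):
    """Compare automatic annotations against manually corrected ones.

    Both arguments are collections of (start, end, label) tuples.
    Returns (precision, recall, f1), counting exact matches only.
    """
    predicted, corrected = set(predicted), set(corrected)
    true_positives = len(predicted & corrected)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(corrected) if corrected else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Invented spans for illustration: the machine found two of the three correct
# entities and also produced one annotation that the human reviewer removed.
predicted = {(0, 9, "place-name"), (20, 26, "place-name"), (30, 35, "institution")}
corrected = {(0, 9, "place-name"), (20, 26, "place-name"), (40, 46, "geographic-feature")}

precision, recall, f1 = score(predicted, corrected)
print(f"precision={precision:.2f} recall={recall:.2f} f1={f1:.2f}")
```

Tracking these figures after each round of corrections gives a simple way to judge whether another round of manual annotation is still improving the model.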