Alexandre Benedetto - Senior Lecturer, Biomedical and Life Sciences

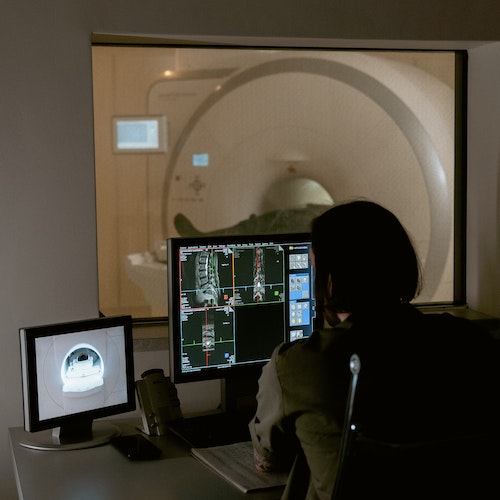

Using open-source machine-learning tools to segment live worms and cells in bioimaging datasets.

Bioimaging modalities have drastically improved in both spatial and temporal resolution over the past two decades, so much so that bioimaging analysis has become a bottleneck for many projects. To address this challenge, automation is necessary, but object segmentation has been hard to automate because biological samples are inherently heterogeneous, notably in their presentation and in signal-to-noise ratios. This complexity and heterogeneity make it difficult to define adequate thresholds, which trips up statistical approaches and leads to poorer segmentation and data loss.

Machine learning is particularly effective at recognising patterns in noisy datasets and has become the approach of choice to segment objects of interest within bioimaging datasets.

Here, I will present my lab’s efforts to implement two segmentation pipelines on the HEC, in the context of a neuroscience project involving worm and cell activity tracking.

Henry Moss – Lecturer, MARS (Mathematics for AI in Real-world Systems)

Science-Informed Machine Learning for Noisy and Limited Data

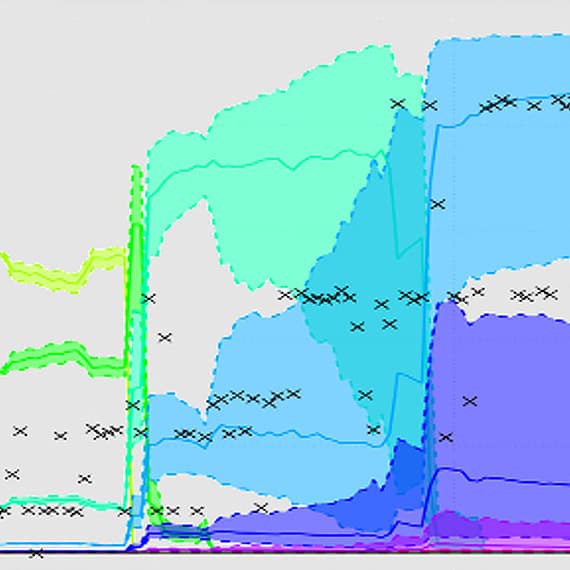

We present a novel framework that integrates scientific knowledge directly into Gaussian process models by embedding known differential equations that describe underlying physical or biological dynamics. This approach enables powerful science-informed machine learning that performs robustly on small, noisy datasets—such as those commonly collected in experimental research—while providing full quantification of uncertainty. We are seeking campus collaborations to apply this methodology to real-world systems.

Georgina Brown, Senior Lecturer in Forensic Linguistics

Into the VoID: An AI-based solution to public trust in voice-data sharing

The voice datasets used in scientific research rarely reflect real-life interactions, particularly those from high-stakes situations such as emergency service calls, anonymous tip-offs and audio-recorded medical appointments. Making these sensitive datasets available for analysis would significantly advance our understanding of, and responses to, critical spoken information. However, a key ethical consideration is protecting speaker identities whilst preserving spoken content and nuance. This talk outlines a project proposal to investigate the use of AI-based voice conversion to anonymise recordings and, critically, to evaluate public trust in this approach to responsible voice-data sharing.

Ziwei Wang - Lecturer in Robotics, Engineering

Integrating Gaze-Based Control and Haptic Teleoperation for Enhanced Human-Robot Collaboration in Diverse Environments

Abstract: Complementary control paradigms will be presented to advance intuitive human-robot collaboration (HRC) across diverse applications, including extreme environments, assistive technologies, industrial settings, and sterile medical operations. We present two distinct approaches to hands-free interaction and teleoperation: (1) a gaze-based system comparing location-based control (spatial mapping via fiducial markers) and velocity-based control (joystick-like directional input), both integrated with blink detection for gripper activation, and (2) a haptic teleoperation framework enabling complex manipulation through manual, semi-autonomous, and fully autonomous modes. The gaze-based system was evaluated using pick-and-place tasks, with performance metrics (task completion time, trajectory efficiency, error rates) and subjective workload (NASA-TLX) revealing significant differences between control paradigms. The haptic system, validated in lunar sample collection and surgical peg-transfer tasks, incorporates bio-monitoring for operator mental load estimation, holographic interfaces for usability, and haptic feedback for transparency. Both systems emphasize adaptability, with the haptic framework demonstrating transferability to alternative interfaces (e.g., foot control), while gaze-based results inform designs for intuitive spatial and velocity mappings. Together, these studies highlight the critical role of multimodal interaction—combining gaze, haptics, and autonomy—in optimizing HRC. Findings provide design guidelines for context-aware interfaces that balance user cognitive load, operational efficiency, and environmental demands, paving the way for robust robotic assistance in settings requiring precision, sterility, or hands-free operation.

Des Fagan - Professor of Computational Architecture, School of Arts

Working with the architects of Eden Morecambe, our project explores the use of Machine Learning (ML) to generate structural and carbon surrogates from images of seashells, reducing carbon in the construction of gridshell buildings. ML enables us to reverse-engineer shell forms from photographs and reconstruct them parametrically in Grasshopper (Rhino3D) software. The resulting digital twin is subjected to structural and carbon evaluation using surrogate models, allowing us to test how morphological changes - such as compression, elongation, or scaling - affect the shell’s efficiency as a building. This approach transforms found seashells into generative, low-carbon design tools.

Edmund Colville - PhD Student, Physics

Self-Supervised Learning for Auroral Morphology Identification

Features in Earth's auroral oval provide crucial insight into dynamic processes in Earth's magnetosphere which are otherwise difficult to observe. These structures, although tens to hundreds of kilometres in size, occur relatively rarely and are scattered through datasets which span years. Self-supervised learning techniques can be used to identify similarities between images of auroral features without the need for a large set of classified observations.

Yingnian Tao - SRA, Psychology & Mark Ryan - Postgraduate Researcher, Marketing

Institutional policies on Generative AI in education across UK universities

Summary: Mapping institutional policy guidelines on GenAI use in teaching, learning and assessment across 75 UK higher education institutions, and critically evaluating the underlying ideologies shaping AI governance in HE.

Poster displays – Des Fagan, Dawid Lipinski, David Parkes, Mengyi Gong, Charles Weir, Rory Yeung, Manoj Roy, Thomas Swarbrick and Shyamli Suneesh.